Apple’s Gatekeeper Distribution Power

Apple’s real posture on AI isn’t “buying Google’s model.” It is using its gatekeeper distribution power (Safari + App Store + Apple Intelligence routing) to assemble a set of multi-vendor procurement contracts: OpenAI is paid in distribution ($0), Google gets ~$1B/yr (starting 2026-04), Anthropic was rejected for asking too much, the China market is split between Alibaba and Baidu, and training compute is rented separately from Google Cloud TPUs (est. $0.1–0.5B/yr). In parallel, Google still pays Apple ~$20B/yr for Safari default search. Net all the cash flows together and Apple’s “AI upstream” is still a net inflow of roughly $18–19B/yr.

This research is organized around five observations: (1) the multi-vendor graph and its reverse cash flow; (2) the Mac as the only consumer-grade device that simultaneously holds “silicon + macOS + your daily data,” making it the household compute + data hub; (3) macOS as currently the best vehicle for Computer Use; (4) Apple’s 2026 capex of just $14B, more than an order of magnitude below the five hyperscalers’ combined $660B+; (5) smart glasses, launching in 2027, as a possible next AirPods-class entry-point category.

Reverse Payments — $1B in, $20B out

Image: Wikimedia Commons (Apple Inc. screenshot).

The common simplified narrative is “Apple pays Google $1B to use Gemini.” The reality is far more complex: on the AI line Apple simultaneously maintains 5 third-party vendors + 2 in-house layers + 1 training-compute vendor, and the vast majority of these relationships involve no cash outflow. The table below is the full 2026 picture of this stack.

2026 Apple AI Vendor Stack · All Known Relationships

Data sources: CNBC, Bloomberg / Mark Gurman, Apple ML Tech Report, 9to5Mac, AppleInsider, official press releases; USD annualized.

| Layer | Vendor | Model / Use | Start / Status | Annualized Pay (Apple POV) |
|---|---|---|---|---|
| On-device | Apple in-house | AFM-on-device · ~3B · 2-bit QAT + LoRA | iOS 18.1 · 2024-10 | Internal R&D · no external pay |
| Cloud | Apple PCC | AFM-server · PT-MoE running on Apple’s own M-silicon data centers | iOS 18.1 · 2024-10 | Internal capex · no external pay |
| Frontier · Global | OpenAI · ChatGPT | GPT-4o → GPT-5 · general Q&A / writing / vision | iOS 18.1 · 2024-10 | $0 · distribution for brand exposure |
| Frontier · Global | Google · Gemini | 1.2T-parameter custom · new Siri semantic layer | iOS 26.4 · 2026-04 | ~$1B / yr · multi-year cumulative ~$5B |
| Frontier · Global | Anthropic · Claude | Candidate for Siri rebuild (rejected) · Xcode 26.3 agentic coding | Siri talks broke down · Xcode 2026-02 | Rejected (Anthropic demanded “several B/yr”) · Xcode usage-billed |
| Frontier · China | Alibaba · Qwen | Tongyi Qianwen + content moderation layer (primary for China Apple Intelligence) | 2025-05 · regulatory approval cleared | Undisclosed · estimated revenue-share / minimal cash |
| Frontier · China | Baidu · Ernie / Wenxin | Partial features (first-gen partner · stepped back after privacy issues) | 2024 onward | Undisclosed |
| Training compute | Google Cloud · TPU | AFM training: v4 ×8,192 + v5p ×2,048 | 2024 to present | $64K/hour billed · est. $0.1–0.5B / yr |
| Reverse inflow | Google → Apple | Safari default search TAC | 2002 to present (~24 years) | +$20B / yr |

The OpenAI relationship is a non-cash barter, “distribution for brand”: neither side pays, but OpenAI benefits via ChatGPT Plus conversions. When Anthropic negotiated to power Siri with Claude, it asked for “billions/year” and Apple walked away; in parallel, Claude has already entered the Apple developer ecosystem via Xcode 26.3. The China market requires local models to obtain generative-AI regulatory approval, so Apple chose Alibaba as primary and Baidu as secondary.

Aggregating All Cash Flows · Net Inflow ~$18–19B / yr

| Cash flow | Amount (Apple POV) | Basis | Note |
|---|---|---|---|
| Google → Apple · Safari TAC | +$20B / yr | DOJ antitrust filings | Safari default search rev-share · relationship dating to 2002 |
| Apple → Google · Gemini | −$1.0B / yr | 2026 multi-year | 1.2T custom Gemini · for Siri |
| Apple → Google Cloud · TPU | −$0.1–0.5B / yr | Estimate | AFM training compute · 8,192 v4 + 2,048 v5p · $64K/h on-demand |
| Net inflow · Apple | ~+$18.5–18.9B / yr | ≈ 20 : 1 (TAC vs Gemini fee) | OpenAI / Alibaba / Baidu cash flows minimal; Anthropic rejected |
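The netting in the table can be reproduced in a few lines. The sketch below uses only the figures quoted above; the one added assumption is that the $64K/hour TPU rate would apply around the clock for a full year, which is what the implied-utilization calculation measures the estimate against.

```python
# Annual cash flows in billions of USD, from Apple's point of view.
SAFARI_TAC_IN = 20.0          # Google -> Apple, Safari default search
GEMINI_OUT = 1.0              # Apple -> Google, custom Gemini licence
TPU_OUT_RANGE = (0.1, 0.5)    # Apple -> Google Cloud, estimated range
TPU_RATE_PER_HOUR = 64_000    # USD per hour, quoted on-demand rate
HOURS_PER_YEAR = 24 * 365     # assumption: billed continuously all year


def net_inflow(tpu_out_billion: float) -> float:
    """Net annual inflow to Apple after both payments to Google."""
    return SAFARI_TAC_IN - GEMINI_OUT - tpu_out_billion


def implied_utilization(tpu_out_billion: float) -> float:
    """Share of the year the TPU fleet would run at the quoted hourly rate."""
    full_year_cost_usd = TPU_RATE_PER_HOUR * HOURS_PER_YEAR
    return tpu_out_billion * 1e9 / full_year_cost_usd


low_net = net_inflow(TPU_OUT_RANGE[1])    # high TPU spend -> low net inflow
high_net = net_inflow(TPU_OUT_RANGE[0])   # low TPU spend -> high net inflow

print(f"Net inflow: ${low_net:.1f}B to ${high_net:.1f}B per year")
for spend in TPU_OUT_RANGE:
    print(f"TPU spend ${spend}B/yr implies {implied_utilization(spend):.0%} utilization")
```

Under that assumption, the $0.1–0.5B estimate corresponds to the TPU fleet being billed for roughly a fifth to nine tenths of the year, so the range is internally consistent with the hourly rate.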

Taken together, the cash cost of this entire vendor stack (Gemini licensing plus TPU rental, roughly $1.1–1.5B/yr) is still far less than the ~$20B/yr Google pays Apple in search revenue share.

— Apple doesn’t have an “AI strategy”; Apple has a set of “frontier model vendors”

The Household Hub — silicon + OS + your data

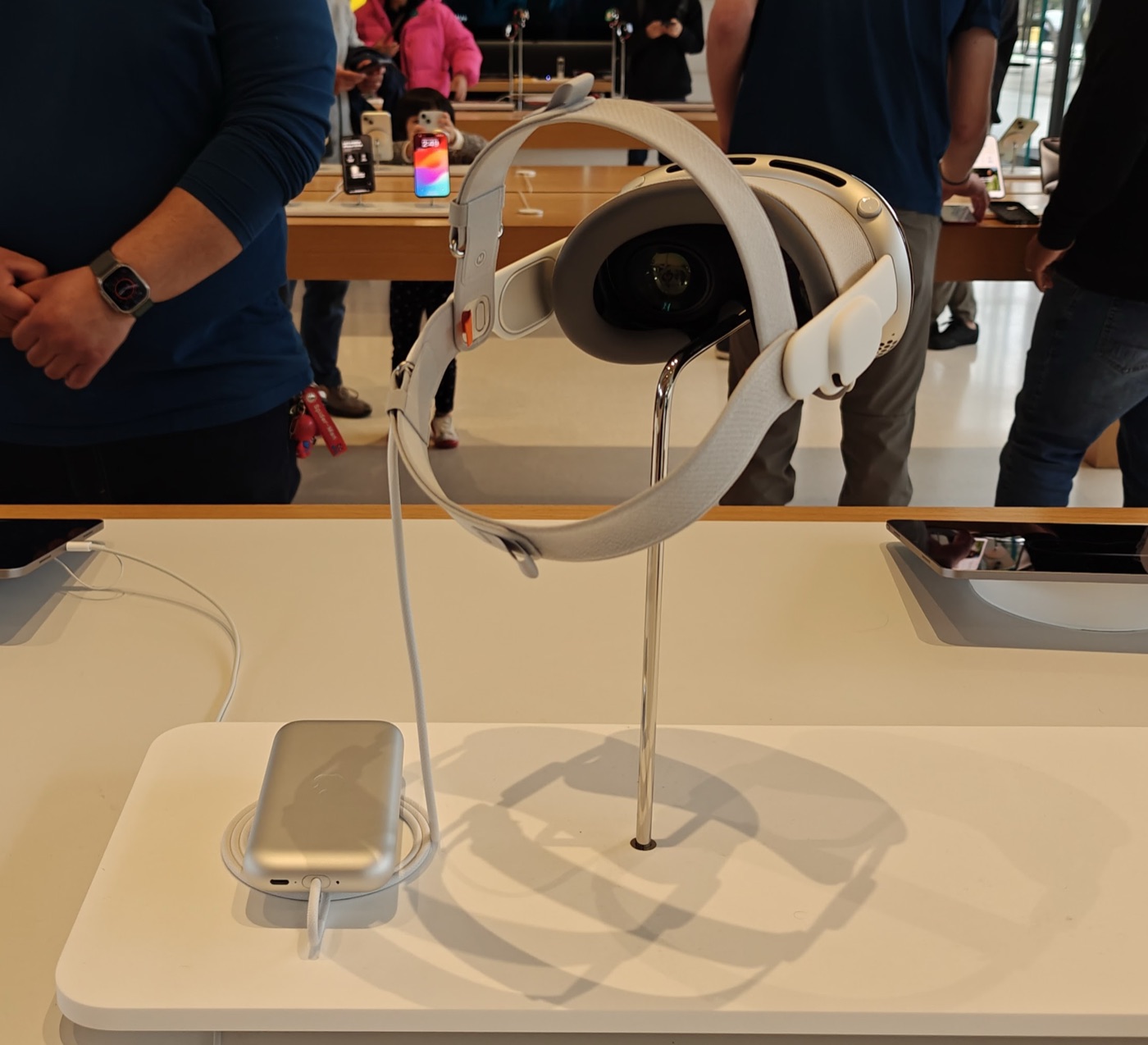

Image: Wikimedia Commons / CC BY-SA 4.0.

Mac’s true position in 2026 isn’t simply “cheap hardware that can run large models” — it is the physical substrate at home that simultaneously holds compute and data. The truly scarce input for AI Agents has never been the model; it’s your daily data: 14 years of family in Photos, 12 years of conversations in Messages, tens of thousands of contracts and attachments in Mail, every calendar entry, every Note draft, every contract in Files, every health trace in Health. Apple has been quietly holding this data for nearly two decades — distributed across roughly 1.4 billion iPhones and ~150 million Macs worldwide, synced via iCloud, with local copies always there.

To put this clearly: in the consumer market, only one player simultaneously holds the five-piece set of “silicon + OS + your daily data + privacy-default architecture + on-device inference framework” — and that’s Apple.

The “Data Substrate” of an Ordinary Household

| Data source | Typical size | Coverage | Note |
|---|---|---|---|
| Photos library | 100–500 GB | Tens of thousands to 100K+ photos | 1TB iCloud ≈ 330K photos · local copy with thumbnails usually ~25% larger |
| Messages history | 10–30 GB | Years of complete conversations | iCloud-synced across all devices · includes attachments / images / voice |
| Mail archive | 5–20 GB | Work + personal | Contracts / invoices / cross-year communications · IMAP fully cached locally |
| Notes / Files / Health | 5–15 GB | Drafts / docs / traces | Apple Notes + iCloud Drive + HealthKit · Health is end-to-end encrypted by default; Notes and iCloud Drive only with Advanced Data Protection enabled |

This dataset is what Google, OpenAI, and Meta all want, and none of them can get it unless the user actively uploads it, because it lives locally on Apple devices, sandboxed and locked by the Secure Enclave. Apple itself, conversely, is the compliant default entry point for this data.

— In 2026, the scarcest agent context is your past, not new models

Mac Local Inference Gradient

Reference prices = Apple Store US base configurations · tok/s measured via llama.cpp / MLX, varies by model and quantization.

| Tier | Model · Config | Memory bandwidth | Models that fit | Typical tok/s | Reference price |
|---|---|---|---|---|---|
| Entry | Mac mini M4 · 24GB | 120 GB/s | 7B–13B Q4 | 7B≈60-80 / 13B≈35-50 | $799 |
| Main | Mac mini M4 Pro · 64GB | 273 GB/s | 30B Q4 / 70B Q4 | 30B≈12-18 / 70B≈8-12 | $2,199 |
| Pro | Mac Studio M4 Max · 128GB | 546 GB/s | 70B FP8 smooth | 70B≈20-25 | ~$4,000 |
| Flagship | Mac Studio M3 Ultra · 512GB | 819 GB/s | 600B+ single-machine full-memory | Llama 3.1 405B≈8-12 | $9,499 |
| Extreme | Mac Studio cluster · TB5 (4–8 nodes) | Aggregate bandwidth ~3 TB/s | 1T+ parameters (Kimi K2) | Qwen3 235B ≈32 tok/s | ~$40K |

These are the mainstream 2026 Apple Silicon configs. M4 Ultra has not been released, so the flagship remains M3 Ultra (819 GB/s). Comparable 70B inference on the x86 path requires an H100-class card ($30K+ each) at 5–10× the power draw.
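Memory bandwidth matters because single-stream token generation on a dense model has to read every weight once per token. A rough ceiling is therefore bandwidth divided by the size of the weights. The sketch below computes that ceiling for the table's bandwidth figures. The flat 4 bits per weight for "Q4" is an assumption (real quantization formats carry some overhead), and the function names are illustrative.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate in-memory size of the weights in GB."""
    return params_billion * bits_per_weight / 8.0


def decode_ceiling_tok_s(bandwidth_gb_s: float,
                         params_billion: float,
                         bits_per_weight: float) -> float:
    """Upper bound on batch-1 decode speed for a dense model.

    Assumes each generated token streams all weights from memory once and
    ignores KV-cache traffic and compute time, so real throughput is lower.
    """
    return bandwidth_gb_s / weight_size_gb(params_billion, bits_per_weight)


# (label, bandwidth GB/s, parameters in billions, assumed bits per weight)
configs = [
    ("Mac mini M4, 7B Q4", 120, 7, 4),
    ("Mac mini M4 Pro, 70B Q4", 273, 70, 4),
    ("Mac Studio M4 Max, 70B FP8", 546, 70, 8),
    ("Mac Studio M3 Ultra, 405B Q4", 819, 405, 4),
]

for label, bandwidth, params, bits in configs:
    ceiling = decode_ceiling_tok_s(bandwidth, params, bits)
    print(f"{label}: at most ~{ceiling:.1f} tok/s")
```

Measured numbers above this ceiling usually mean something other than dense batch-1 decoding is being reported: a mixture-of-experts model that reads only its active parameters per token, speculative decoding, or prompt-processing throughput rather than generation speed.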

The Four Pillars of “Household Hub”

- Silicon: unified memory + high bandwidth. CPU / GPU / Neural Engine share the same RAM pool — model weights don’t need to be shuttled over PCIe. M4 Pro 273 GB/s, M4 Max 546 GB/s, M3 Ultra 819 GB/s — memory bandwidth previously only seen in data-center GPUs, packaged in a desktop-grade ~30W idle chassis.

- OS: macOS Tahoe (26) is already a competent household server. A launchd daemon (a job installed system-wide, as opposed to a per-user agent) starts at system boot, before any user logs in, and can be set to restart automatically on crash. A Tailscale mesh VPN lets your iPhone / iPad / on-the-go MacBook connect directly to the home Mac mini, with zero exposed ports and zero public IPs. Preventing sleep with caffeinate / pmset is a solved engineering problem, not a blocker.

- Data: all your daily data is already on the Mac. Photos / Messages / Mail / Notes / Calendar / Files / Health — Mac has full local copies of all of these (or optional “download complete files”). The agent doesn’t need to upload anything to any cloud to read, index, or do RAG; ollama / mlx embeddings hit the file system directly. This is structural — Google / NVIDIA / Meta and any other player cannot buy this layer.

- Framework: Apple Intelligence + App Intents are the compliant channel. Apple Foundation Model on-device version ~3B parameters + 2-bit QAT + LoRA adapter; server version uses PT-MoE running on Apple’s own M-silicon data centers (Private Cloud Compute, encrypted memory, invisible to anyone). App Intents allow any third-party app to expose functionality, entities, and queries to Siri / Apple Intelligence — since iOS 26.4, Personal Context spans Calendar / Files / Mail / Messages / Notes / Photos, six system domains.
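The launchd behaviour described in the OS pillar (start at boot, restart on crash) comes down to a small property list. The sketch below generates one with Python's standard `plistlib`. `Label`, `ProgramArguments`, `RunAtLoad`, and `KeepAlive` are standard launchd keys; the label `com.example.home-agent` and the binary path are hypothetical placeholders, not anything from Apple or the tools named above.

```python
import plistlib


def build_launch_daemon(label: str, program_args: list[str]) -> bytes:
    """Return an XML property list describing a boot-time launchd job."""
    job = {
        "Label": label,                    # unique job identifier
        "ProgramArguments": program_args,  # argv of the agent process
        "RunAtLoad": True,                 # start when launchd loads the job
        "KeepAlive": True,                 # relaunch whenever the process exits
    }
    return plistlib.dumps(job)             # XML format by default


# Hypothetical agent binary and label, for illustration only.
plist_bytes = build_launch_daemon(
    "com.example.home-agent",
    ["/usr/local/bin/home-agent", "--serve"],
)
print(plist_bytes.decode("utf-8"))
```

On a real machine the output would be saved as a `.plist` file under `/Library/LaunchDaemons/` and loaded with `launchctl`; jobs in that directory are system-wide daemons that run without a logged-in user, which is the property the household-server argument relies on.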

One sentence · Who has all five pieces

The only player with “silicon + OS + data + privacy architecture + on-device inference” all at once is Apple.

- Google: has data (Search / Gmail / Drive / Photos), but little first-party consumer hardware at scale (Pixel remains marginal); data lives almost entirely in the cloud, and users don’t control local copies.

- NVIDIA: only has silicon. No consumer OS, no consumer data, no household form factor.

- Meta: has social data + Ray-Ban glasses + Quest, but no consumer OS, no local-inference hardware, and data lives largely in the cloud.

- Microsoft: has enterprise OS + Office data; the Surface consumer side has long been marginal; Copilot+ PCs are still chasing Apple Silicon’s memory bandwidth.

- Apple: M-silicon + macOS + 1.4B-installed-base Photos/Messages/Mail/Notes/Calendar/Health + sandbox / Secure Enclave / Private Cloud Compute + 3B on-device foundation model + App Intents. All five present.

This isn’t a “Mac inference is cheap” story. This is the first time “home compute + home data” has been consolidated by a single ecosystem.

Computer Use — the agent’s preferred OS

Image: Apple via Wikipedia (macOS Sequoia article).

On 2026-04-16, OpenAI pushed its largest update yet to the Codex Mac client, adding Computer Use: Codex can see the screen, control the cursor, and click and type across any macOS app. Federico Viticci’s first-hand review at MacStories puts it bluntly: “the best computer use feature I’ve ever tested.”

In the same week OpenAI also upgraded the underlying model to GPT-5.5: SWE-bench Verified 88.7%, Terminal-Bench 2.0 82.7%, hallucination rate down 60% vs GPT-5.4. In other words, Computer Use as a capability has — for the first time — both “a strong enough model layer” and “a smooth enough system layer.”

Why macOS is the Better CUA System

- Accessibility API battle-tested. The macOS Accessibility framework was designed for screen readers, automation, and assistive input. VoiceOver, Switch Control, and Dictation all depend on it, and the API surface has been stable for twenty years. The cost for an agent to obtain semantic information from the screen is far lower than with the pixel + OCR fallback often needed on Windows when apps expose their UI tree poorly.

- AppleScript / Shortcuts legacy. From AppleScript in 1993 to Shortcuts (iOS in 2018, macOS since 2021), the system-level automation layer has always been there. Codex / Claude Code can let an Agent directly call Shortcuts the user has already written, rather than simulating clicks manually. Windows has no equally unified, user-facing equivalent.

- Developer machines are user machines. Codex / Claude Code / Cursor / Zed — this generation of Agent clients is all Mac-first — not “also supports Mac” but development priority #1. The reason is simple: the engineers writing these tools all use Macs themselves. Heavy-interaction capabilities like Computer Use naturally mature first on macOS.

- Clear single-window model. macOS has a relatively unified window model (one main window per app, global menubar), and Mission Control gives clear visual hierarchy; Windows has MDI / SDI / floating panels / taskbar paradigms coexisting, making UI-tree parsing harder for an agent.

- Local vision encoders are cheap. Running CLIP / SigLIP / GPT-4V-class vision encoders on Apple Silicon is feasible on consumer hardware. The screenshot → element-identification step of Computer Use would have runaway costs if billed purely via cloud vision tokens; Mac runs a large portion of it locally.

- Security model fits. macOS sandbox, Privacy & Security panel, the TCC (Transparency, Consent, Control) framework — the ability to give an Agent explicit authorization for a specific app / directory is system-native. On Windows this requires additional policy plumbing; on Linux you cobble capabilities + AppArmor together.
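The Shortcuts point in the list above can be made concrete: macOS ships a `shortcuts` command-line tool, so an agent can invoke a user-authored Shortcut as a subprocess instead of simulating clicks. The sketch below is a minimal wrapper. The helper names and the example Shortcut name are hypothetical; `shortcuts run` and its `--input-path` flag are the parts taken from the macOS tool.

```python
import subprocess
from typing import Optional


def shortcut_command(name: str, input_path: Optional[str] = None) -> list[str]:
    """Build the argv for running a Shortcut via the macOS `shortcuts` CLI."""
    cmd = ["shortcuts", "run", name]
    if input_path is not None:
        cmd += ["--input-path", input_path]  # file handed to the Shortcut as input
    return cmd


def run_shortcut(name: str,
                 input_path: Optional[str] = None) -> subprocess.CompletedProcess:
    """Run the Shortcut and raise if it exits non-zero (macOS only)."""
    return subprocess.run(
        shortcut_command(name, input_path),
        check=True,
        capture_output=True,
        text=True,
    )


# Hypothetical Shortcut name; building the command is safe on any platform.
print(shortcut_command("Resize Images", "/tmp/photo.jpg"))
```

Passing the command as a list rather than a shell string avoids quoting problems with Shortcut names that contain spaces. It is also the more conservative choice when an agent, rather than a person, is composing the arguments.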

Computer Use is the “mouse and keyboard” of the Agent era — once stabilized, it means an Agent no longer needs dedicated API integration; any GUI app can be automated. The first system to make this production-ready is macOS, and the strategic implications for Apple are not yet fully priced in by the market.

— Codex × macOS, 2026-04-16 update

The Capex Inversion — $14B vs $660B

Image: Wikimedia Commons / CC BY-SA 3.0.

2026 combined capex of the five hyperscalers lands in the $660–690B range, with ~75% directly tied to AI infrastructure (GPUs, data halls, power) — per Futurum Group, CreditSights, and Trefis estimates. Apple’s same-year capex is $14B (~3.4% of revenue), a gap of more than a full order of magnitude.

2026 capex · same-basis comparison

Amazon leads at $200B, followed by Alphabet ($180B), Microsoft ($120B+), Meta ($125B), and Oracle ($50B); Apple’s $14B is the obvious outlier in this group, just 7% of Amazon’s.

Why Apple Doesn’t Follow

Outsource the depreciation cycle to someone else.

Data-center depreciation is 5–6 years; Amazon’s $200B capex means $33–40B in new annual depreciation pressing on the income statement; by 2028 the depreciation line item alone eats tens of billions of profit.
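The $33–40B figure follows from simple straight-line depreciation, sketched below. Treating the whole $200B as one asset class on a single schedule is a simplifying assumption; real capex mixes land, buildings, and servers with different useful lives.

```python
def straight_line_depreciation(capex_billion: float, useful_life_years: int) -> float:
    """Annual depreciation charge when cost is spread evenly over the asset life."""
    if useful_life_years <= 0:
        raise ValueError("useful life must be positive")
    return capex_billion / useful_life_years


AMAZON_2026_CAPEX_B = 200.0  # from the comparison above

for life in (5, 6):
    charge = straight_line_depreciation(AMAZON_2026_CAPEX_B, life)
    print(f"{life}-year life: ${charge:.1f}B of new depreciation per year")
```

Each additional year of spending at this level stacks another charge of the same size on top, which is why the depreciation line grows faster than any single year's capex figure suggests.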

Apple chose a different path: $1B/year licensing Gemini. If foundation models continue to commoditize (price wars, open-source catch-up, falling inference costs), Apple is the buyer, with maximum bargaining power; hyperscalers are sellers who have already poured money into concrete, only able to amortize via scale.

This is consistent with Apple’s historical approach to hardware: don’t build panels (OLED purchased from Samsung / LG), don’t build basebands (5G modem purchased from Qualcomm for years), don’t build main NAND (purchased from Kioxia / Micron), and only do system integration + entry-point + brand. The LLM is now in the same drawer.

| Metric | Value | Note |
|---|---|---|
| Apple 2026E capex | $14B | ~3.4% of revenue · supply-chain diversification + moderate AI infrastructure |
| Amazon 2026 capex | $200B | 14× Apple · primarily tied to AWS GPU clusters |
| Five hyperscalers combined | $660–690B | ~$450B AI-direct · 2026 full-year basis |
| Apple Services gross margin | 75.4% | FY25 · doesn’t burn capex, sustaining extreme-margin structure |

The counterintuitive aspect of the AI era: not burning capex is the safer position. Foundation models are commoditizing rapidly; whoever first sinks tens of billions into H100 / Blackwell clusters carries the depreciation. Apple is swapping gateway access for fees, effectively offloading AI-era capacity risk onto Google / Microsoft / Amazon shareholders.

— 2026 capital-cycle inverse pricing

Smart Hardware — ambient computing

Image: Wikimedia Commons / CC BY-SA 4.0.


In 2025, Meta Ray-Ban smart glasses shipped 7 million+ units for the full year, capturing 82% of the global smart-glasses market. The number was large enough that Apple reversed course in October 2025: it postponed Vision Air (a lightweight headset) and redirected engineers to the smart-glasses project, targeting a 2027 launch with a possible end-of-2026 debut. (Bloomberg / Gurman; Ming-Chi Kuo.)

| Reference point | Units | Note |
|---|---|---|
| Meta Ray-Ban 2025 units | 7.0+ M | 82% global share · near-monopoly outside Anker, category validated |
| Apple smart-glasses target | 3–5 M / yr | 2027 launch basis · Ming-Chi Kuo forecast · multi-frame / multi-material SKUs |
| Apple AR/VR total shipments | 10+ M | 2027E · Vision Pro 2 + Vision Air + smart glasses combined |
| Reference · AirPods first-year shipments | 14 M | 2017 · captured 60%+ category share within 3 years |

Three Time Windows for Apple’s Glasses Roadmap

- Gen 1 · 2027 launch · no display · multi-frame / multi-material. Form factor targets Ray-Ban Meta: camera + microphone + health tracking + Apple chip, depending on iPhone compute. Fashion-accessory route, multiple materials and frames. Repeatedly confirmed by Bloomberg / Gurman.

- Gen 2 · 2028 (originally later → moved up) · with display · direct counter to Meta Ray-Ban Display. Originally planned for post-2030, pulled forward because Meta launched Ray-Ban Display in 2025. With micro-OLED single- or dual-eye HUD, capable of displaying notifications, navigation arrows, and Siri / Gemini visual responses.

- Parallel · Vision Pro 2 · 2026 H2 · M5 Neural Engine + controls upgrade. Headset form factor retained, continuing in the high-ASP segment of professional content / spatial computing / enterprise scenarios. M5 refresh’s Neural Engine strengthens eye-tracking, gesture recognition, and spatial content creation. Vision Pro doesn’t retreat; smart glasses run in parallel.

Gateway Logic

Smart glasses = AirPods moment × 10.

AirPods were mocked as “expensive wireless earbuds” when launched in 2016; within three years, they captured 60%+ category share and contributed over half of Wearables revenue. Smart glasses sit in a similar position — but their gateway properties are stronger:

- Visual input: camera + vision model = “what you’re looking at” becomes Agent context directly. Siri × Gemini becomes meaningful on this hardware.

- Audio output: bone-conduction / micro-speakers + all-day wear = the complete loop of Ambient Computing — iPhone is “pick up to use,” glasses are “always worn.”

- First-party distribution: not working for Meta / Samsung, but Apple’s own hardware + Apple Intelligence + App Store rev-share.

- Installed-base conversion: among 1.4 billion iPhone users, even 5% buying a pair within 3 years means 70 million units — already 10× Meta’s full-year volume.
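The installed-base arithmetic in the last bullet is worth separating into cumulative and per-year terms, since the 10× comparison sets three years of Apple sales against one year of Meta's. The sketch below uses only the figures quoted above; spreading the purchases evenly over the three years is an assumption made for illustration.

```python
IPHONE_INSTALLED_BASE_M = 1_400.0  # millions of iPhone users
META_2025_UNITS_M = 7.0            # millions of Ray-Ban Meta units, full-year 2025
ATTACH_RATE = 0.05                 # 5% of users buy a pair
WINDOW_YEARS = 3                   # assumption: purchases spread evenly over the window


def cumulative_units_m(installed_base_m: float, attach_rate: float) -> float:
    """Total units sold (millions) if the given share of users buys once."""
    return installed_base_m * attach_rate


total_m = cumulative_units_m(IPHONE_INSTALLED_BASE_M, ATTACH_RATE)
per_year_m = total_m / WINDOW_YEARS

print(f"Cumulative: {total_m:.0f}M units, "
      f"{total_m / META_2025_UNITS_M:.0f}x Meta's 2025 volume")
print(f"Per year:   {per_year_m:.1f}M units, "
      f"{per_year_m / META_2025_UNITS_M:.1f}x Meta's 2025 volume")
```

On a like-for-like annual basis the multiple is closer to 3× than 10×. That is still well above the 3–5M per year forecast cited for the first generation, so the 5% scenario should be read as an upper case rather than a baseline.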

This layer of hardware + gateway distribution is something Google / OpenAI / Anthropic cannot buy with any amount of capex.

Synthesis — a contrarian position

Image: Wikimedia Commons (Daniel L. Lu) / CC BY-SA 4.0.

Putting the five threads together, Apple’s position in the 2026 AI ecosystem can be described like this.

Five Structural Advantages

- Gateways, not models. App Store + default search + Apple Intelligence routing: three layers of gateway control, all intact. Foundation models become licensable components; the $1B/year access fee is only about 1% of the $109B Services business.

- Household compute + data hub. Mac is simultaneously the household compute resource (M3 Ultra 819 GB/s single-machine running 600B+, Mac mini M4 Pro desktop running 70B) and data substrate (Photos / Messages / Mail / Notes / Health all local). The only player with all five — “silicon + OS + your data + privacy architecture + on-device framework” — is Apple. This isn’t a model story; this is a structural story.

- Best system for Computer Use. Codex / Claude Code / Cursor / Zed all Mac-first; Accessibility + Shortcuts + sandbox model make Agents reliable on macOS — other systems are still chasing.

- No capex arms race. $14B vs $660B+ is an order-of-magnitude gap. Outsource the depreciation pressure to Google / Microsoft / Amazon shareholders, retain buyer bargaining power. Once foundation models commoditize, this position is actually the most comfortable.

- Smart hardware second curve. Smart glasses 2027 + Vision Pro 2 2026 + AirPods continuing. 1.4 billion iPhone installed base + Apple Intelligence + first-party distribution — a hardware + gateway combo no one else can buy.

Bottom Line

Apple’s real advantage in the AI era isn’t “I can also build models” — it’s “I don’t need to build models.”

Everyone else is racing on GPUs, racing on data centers, racing on frontier models. Apple isn’t. What it’s doing is slower but more structural:

- Treat LLMs as procured components like OLED panels / 5G basebands

- Turn the Mac into the household compute + data hub — five pieces (silicon, OS, daily data, privacy, on-device inference) all assembled

- Turn macOS into the system where Computer Use matures first

- Turn smart glasses into the next Ambient Computing gateway

$1B in, $20B out, $14B capex, 1.4 billion installed base — this combination is a rare position in the 2026 AI capital cycle where one can “win without burning.”

The scarcest thing in the AI era is not the model — it is a gateway distribution system that users open every day, installed across 1.4 billion devices, and that regulators and antitrust authorities cannot pry apart. Apple just happens to own that. The rest is patience, and the discipline not to participate in the war.

— Contrarian research · 2026-04-25

References — Siri rebuild · on-device AI · distribution game

Official and Regulatory

- OpenAI × Apple partnership announcement — Siri integration with ChatGPT. openai.com

- Apple ML Research — Apple Intelligence Foundation Language Models technical report. machinelearning.apple.com

- DOJ — US v. Google LLC antitrust case, search-default distribution rev-share figures. justice.gov

Media Tracking

- CNBC · Apple-Google $1B/yr Siri AI deal. cnbc.com

- AppleInsider · Anthropic-Apple talks broke down. appleinsider.com

- Bloomberg / Mark Gurman — long-running column on Apple internal news. bloomberg.com

- 9to5Mac · Siri × Gemini integration analysis. 9to5mac.com

- MacStories / Federico Viticci · OpenAI Codex Mac client first-hand review. macstories.net

- Ming-Chi Kuo — Apple supply chain analysis. medium.com/@mingchikuo

On-Device Inference Tools

- llama.cpp — de facto standard for running local LLMs on Apple Silicon. github.com/ggerganov/llama.cpp

- MLX — open-source ML framework optimized for Apple Silicon. ml-explore.github.io/mlx

- OpenAI Codex Changelog — Mac client Computer Use update. platform.openai.com

Industry Research / Sell-side

- Futurum Group — AI and enterprise IT industry analysis. futurumgroup.com

- CreditSights — credit ratings and fixed-income research. creditsights.com

- Trefis — valuation decomposition and scenario analysis. trefis.com